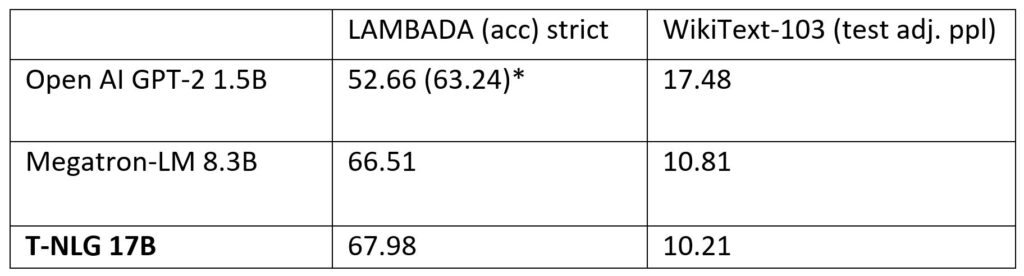

Turing Natural Language Generation (T-NLG) is a 17 billion parameter language model by Microsoft that outperforms the state of the art on many downstream NLP tasks. We present a demo of the model, including its freeform generation, question answering, and summarization capabilities, to academics for feedback and research purposes.

Microsoft Research Blog

About Project Turing: T-NLG is part of Project Turing, an applied research group that works to evolve Microsoft products with deep learning for text and image processing.

Microsoft explains that T-NLG is a Transformer-based generative language model, which means it can generate words to complete open-ended textual tasks. In addition to completing an unfinished sentence, it can generate direct answers to questions and summaries of input documents. Previous systems for question answering and summarization relied on extracting existing content from documents to serve as a stand-in answer or summary, but such extracts often appear unnatural or incoherent. “With T-NLG we can naturally summarize or answer questions about a personal document or email thread.”

Software / hardware

1. NVIDIA DGX-2 hardware setup with InfiniBand connections so that communication between GPUs is faster than previously achieved.

2. Tensor slicing to shard the model across four NVIDIA V100 GPUs on the NVIDIA Megatron-LM framework.

3. DeepSpeed with ZeRO to reduce the model-parallelism degree (from 16 to 4), increase batch size per node fourfold, and reduce training time threefold. DeepSpeed makes training very large models more efficient with fewer GPUs: it trains at a batch size of 512 with only 256 NVIDIA GPUs, compared to the 1024 NVIDIA GPUs needed when using Megatron-LM alone. DeepSpeed is compatible with PyTorch.
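The figures in this list fit together arithmetically, and a small sketch makes that visible. The Python below is only an illustration, not Microsoft's or DeepSpeed's code: the `ParallelLayout` class and its field names are hypothetical, and it assumes that each DGX-2 node holds 16 GPUs, that one model replica occupies exactly "model-parallelism degree" GPUs, and that the global batch of 512 is split evenly across replicas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelLayout:
    """Hypothetical helper: how a GPU cluster is divided for training."""
    total_gpus: int
    model_parallel_degree: int     # GPUs that jointly hold one model replica
    gpus_per_node: int = 16        # assumption: one DGX-2 node = 16 V100 GPUs
    global_batch_size: int = 512   # batch size quoted in the post

    def __post_init__(self) -> None:
        # Assumption: replicas tile nodes and the cluster without leftovers.
        if self.total_gpus % self.model_parallel_degree:
            raise ValueError("total_gpus must be a multiple of the degree")
        if self.gpus_per_node % self.model_parallel_degree:
            raise ValueError("gpus_per_node must be a multiple of the degree")
        if self.global_batch_size % self.data_parallel_replicas:
            raise ValueError("batch must split evenly across replicas")

    @property
    def data_parallel_replicas(self) -> int:
        # Number of model copies training in parallel on different data.
        return self.total_gpus // self.model_parallel_degree

    @property
    def replicas_per_node(self) -> int:
        return self.gpus_per_node // self.model_parallel_degree

    @property
    def samples_per_replica(self) -> int:
        return self.global_batch_size // self.data_parallel_replicas

    @property
    def samples_per_node(self) -> int:
        return self.samples_per_replica * self.replicas_per_node


# Figures taken from the post: Megatron-LM alone vs. DeepSpeed with ZeRO.
megatron_only = ParallelLayout(total_gpus=1024, model_parallel_degree=16)
with_deepspeed = ParallelLayout(total_gpus=256, model_parallel_degree=4)

for name, layout in [("Megatron-LM alone", megatron_only),
                     ("DeepSpeed + ZeRO", with_deepspeed)]:
    print(name,
          "| replicas:", layout.data_parallel_replicas,
          "| samples per node:", layout.samples_per_node)

ratio = with_deepspeed.samples_per_node // megatron_only.samples_per_node
print("batch size per node increase:", ratio)
```

Under these assumptions both setups run 64 model replicas, which is why the same batch size of 512 is reachable with a quarter of the GPUs, and each node processes four times as many samples per step, in line with the fourfold figure above.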

** Recent advances in NLP research, such as BERT from Google AI, ELMo from the Allen Institute, GPT-2 from OpenAI, and ULMFiT, are usually trained on large amounts of unlabeled text that is publicly available on the Internet.

In February 2019, OpenAI (a nonprofit AI research entity) launched a new language model called GPT-2 and stated that, given a sentence or phrase to start from, GPT-2 could predict what the user's next words would be. Apparently the system was so good that it was withheld from public release for fear that it would be misused (as the entity told the press). Adam Daniel King (one of the tool's creators) has made the OpenAI model available online so that anyone can try it, and has published the full version of his text generator as Talk to Transformer.

Our test was to enter the text: I am writing a book on Artificial Intelligence called "The States of AI". The result was somewhat disappointing, because it speaks of 2015 and the tone of the output is off. (Update Aug 20: OpenAI released a larger model: 774M.)

Source: Los estados de la Inteligencia Artificial | 🇬🇧 The states of Artificial Intelligence, Chapter 7.2: Natural Language Processing (NLP) (https://statesaiia.com/)

T-NLG potential applications

The company argues that T-NLG has advanced the state of the art in natural language generation, providing new opportunities for Microsoft and its customers. Beyond saving users time by summarizing documents and emails, T-NLG can enhance experiences with the Microsoft Office suite by offering writing assistance to authors and answering questions that readers may ask about a document. Furthermore, it paves the way for more fluent chatbots and digital assistants, as natural language generation can help businesses with customer relationship management and sales by conversing with customers.

Launching a private demonstration

Microsoft also says that the project is launching a private demonstration of T-NLG, including its free-form generation, question answering, and summarization capabilities, to a small set of users within the academic community for initial testing and feedback. If you would like to nominate your organization for a private preview of Semantic Search by Project Turing, submit the nomination here:

References:

- Microsoft Research Blog

- Los estados de la Inteligencia Artificial | 🇬🇧The states of Artificial Intelligence. URL: https://statesaiia.com/
